International Peer Review Expert Panel report

A report to the Governing Council of the Canadian Institutes of Health Research

February 2017

Table of Contents

- Citation

- Acknowledgements

- Declaration of Interests

- Executive Summary

- Preamble

- Scope and Process of the Review

- Context of the Peer Review Expert Panel

- General Comments on the Panel's Perspective and Objectives

- General Observations

- Recommendations

- Conclusion

- References

- Appendix A: Responses to Original Six Mandate Questions

- Appendix B: Peer Review Expert Panel Member Biographies

- Appendix C: List of Materials Provided to the Peer Review Expert Panel

- Appendix D: Agenda, January 16-17, 2017

Citation

Gluckman, P., Ferguson, M., Glover, A., Grant, J., Groves, T., Lauer, M. & Ulfendahl, M. (2017). International Peer Review Expert Panel: A report to the Governing Council of the Canadian Institutes of Health Research.

Acknowledgements

The Panel wishes to place on record its appreciation for the quality and depth of the reports provided by CIHR and the participants that met with the Panel. The Panel wants to emphasize and acknowledge the professionalism with which the CIHR staff, officers and President engaged with the review process. In particular, the Panel acknowledges the contributions of Sarah Viehbeck and David Peckham to supporting the Panel and in the preparation of this report.

Declaration of Interests

At the outset of the Peer Review Expert Panel process and at the face-to-face Panel meetings in January 2017, all Panel members were invited to declare interests and any prior or current association with CIHR.

The Panel was assertive with regard to ensuring its own independence. None of the Panel members has had a recent association with CIHR. At no stage prior to, during, or after the review was there any attempt by CIHR to direct or influence the Panel's deliberations or recommendations. Further, we wish to emphasize that there was absolute unanimity among the seven Panel members regarding the commentary and recommendations that follow.

The table below summarizes the interests declared.

| Panel Member | Declared conflicts and associations with CIHR |
|---|---|
| Sir Peter Gluckman | No conflicts to declare. Sat on the CIHR Institute Advisory Board for the Institute of Human Development, Child and Youth Health, 2001-2003 |
| Mark W. J. Ferguson | No conflicts to declare |
| Dame (Lesley) Anne Glover | No conflicts to declare |
| Jonathan Grant | No conflicts to declare. Collaborated on an international research consortium, which included CIHR, on Project Retrosight – a large scale research impact evaluation in the area of cardiovascular disease – in 2008 |
| Trish Groves | No conflicts to declare |
| Michael Lauer | No conflicts to declare |
| Mats Ulfendahl | No conflicts to declare |

Executive Summary

The mandate of the CIHR is to: "excel, according to internationally accepted standards of scientific excellence, in the creation of new knowledge and its translation into improved health for Canadians, more effective health services and products and a strengthened Canadian health care system." The remit of CIHR extends from discovery science and knowledge creation to knowledge translation. It must span and integrate from biomedical and clinical research to research into health services and systems, and the social, cultural, and environmental determinants of population health. The mandate also requires a commitment to consider the potential translational impact of the research to be funded.

The process of reforming the former Open Operating Grants Program, which had been operating largely using a legacy approach carried over from the former Medical Research Council, into a program that would allow CIHR to address the full scope of its mandate while also reducing the burden on peer reviewers and applicants, was a major undertaking of CIHR from 2009 to the present. Poor operational implementation coupled with a resource-constrained health research funding environment and the introduction of many simultaneous changes at CIHR made these reforms problematic and led to an erosion of trust between CIHR and its stakeholders which must now be rebuilt.

In September 2016, CIHR announced an independent, international review of the design and adjudication processes of CIHR's investigator-initiated programs. This was part of the mandated five-yearly cycle of international review at the agency.

The International Peer Review Expert Panel supports the intent behind the reforms to these programs and acknowledges CIHR for displaying vision and innovation in its program design and grant review processes. The design intent and logic of innovation regarding the open grant programs and the process of peer review was sound. It is most unfortunate that there was implementation failure.

Peer review is but one part of grant review and funding allocation. There are strategic dimensions that also influence the grant review process and need to be married to the technical aspects of the peer review process so as to have a world class grant allocation system. Peer review is essentially a subjective process that becomes particularly problematic when funding levels are low; it relies on all stakeholders seeing the process as fair and trustworthy. Some widely held assumptions regarding the design of peer review are not supported by the evidence.

There are strengths and consequences to every design of peer review and these will need to be considered by CIHR in moving forward. Any peer review system structure has design constraints. Regardless of the final design, the overall objective for the investigator-initiated funding programs at CIHR must be to support the CIHR mandate as stated in the CIHR Act.

Accordingly, the Panel's report presents a general framework for the future but leaves it to CIHR, working with its stakeholders, to address some strategic and operational considerations that would determine a final design. The Panel considers that the current peer review design can be readily evolved to one that is world class and meets the mandate of the CIHR while rebuilding a sense of fairness and trust. It does not consider further retrenchment to the former system to be desirable.

Summary of Recommendations

1. We recommend that the Government of Canada increases investment in health research.

2. We recommend that the CIHR Act be amended to separate the role of Governing Council Chair from President/CEO (Article 9.1).

3. We recommend the appointment of an international advisory board to assist the reform process.

4. We recommend that all stakeholders in the Canadian health research system work together to strengthen its impact on the health of Canadians.

5. We recommend that CIHR decide on and widely communicate about its investment strategy.

6. We recommend that CIHR institute the following best practices for peer review:

   - 6.1. Introduce Ph.D. research trained scientific review officers as CIHR staff to support reviewer recruitment and assignment and grant management, and liaison with applicants;

   - 6.2. Include more international reviewers to minimize reviewer demand in Canada, decrease possibilities of conflicts of interest and positive or negative bias, and to support CIHR's mandate to "excel, according to internationally accepted standards of scientific excellence…"; and

   - 6.3. Institute a process for applicant response to reviewer comments for applications that survive triage.

7. We recommend that CIHR continues to innovate in the way that it undertakes peer review.

Preamble

Canada has a long-standing tradition of demonstrated excellence across the full spectrum of health research. Canada is also well-regarded internationally for pioneering new fields, including evidence-based medicine and knowledge translation.

With the creation of the Canadian Institutes of Health Research (CIHR) through an Act of Parliament in 2000, the Government of Canada set a new vision for health research in the country and for the way it is funded. The mandate of the CIHR is to: "excel, according to internationally accepted standards of scientific excellence, in the creation of new knowledge and its translation into improved health for Canadians, more effective health services and products and a strengthened Canadian health care system."

The remit of CIHR is all-encompassing, extending from discovery science and knowledge creation to knowledge translation. It must span and integrate biomedical and clinical research, research into health services and systems, and research into the social, cultural, and environmental determinants of population health. The mandate also requires a commitment to consider the potential impact of the research to be funded. This expanded and multidimensional scope remains a challenge for CIHR seventeen years after its creation, both in terms of encouraging integration across these four pillars and in managing the balance between promoting excellence across the full spectrum of health-related research and driving its impact.

The process of reforming the former Open Operating Grants Program, which had been a legacy approach carried over from the former Medical Research Council, into a program that would allow CIHR to address the full scope of its mandate while also reducing the burden on peer reviewers and applicants, was a major undertaking of CIHR from 2009 to the present. The scope and nature of these reforms, which took place in the context of constrained funding, have been met with mixed reactions from the research community and CIHR's stakeholders, both for the overall design and particularly because of significant problems in its implementation. The subsequent reaction from the research community then exposed a number of other issues which in turn inflamed the community response further and led to further changes in the delivery of the funding programs – these changes remain in progress. As a result, stakeholder 'trust' in the process has been compromised.

The CIHR is required to have an international review process every five years. Because of the current context, CIHR convened this Peer Review Expert Panel [hereafter referred to as 'the Panel'] with a specific mandate to examine the design and adjudication processes of CIHR's investigator-initiated programs in relation to the CIHR mandate, the changing health sciences landscape, international funding agency practices, and the available literature on peer review.

Overall, it is the belief of the Panel that the intent of the reforms as originally envisaged was timely, innovative, and generally in the right direction. The over-burdening of the peer review system is a problem shared by funders internationally. CIHR was bold and innovative and at the cutting edge internationally, and should be acknowledged for the intent and direction. We hope that the events of the recent past do not inhibit this spirit of innovation.

It was clear to the Panel that there was a huge amount of well-intended effort put into the design of the reforms, including modelling of likely demand and consultation with the research community. However, there was a series of significant implementation failures, compounded by ineffective engagement with the research community, that undermined confidence in, and execution of, the proposed reforms.

Underlying all of this concern is the state of funding of health research in Canada, which effectively became more constrained during this reform process.

Following a review of extensive materials, reports, submissions, and meetings with stakeholders, the Panel considers it likely that, without the benefit of hindsight, we would have endorsed the fundamentals and the intent of the original design. We believe that, if implemented properly, the design could have led to an innovative and effective system for allocating research funding, with two provisos: first, the need for more extensive use of international reviewers; and second, the employment of scientific review officers at CIHR to manage the relationship between the agency and the research community.

The Panel considered that its role was not to undertake a detailed post-mortem and focus on the operational failures, but rather to take a forward-looking view in its deliberations to assist CIHR towards a functional, trustworthy, and effective grant award system that reflects its mandate and can build from where it is now. It emphasizes that the path forward will need to be a collective enterprise involving not just CIHR but other parts of the research ecosystem, including particularly the broad research community, both its institutions and individuals. It will be essential to focus on rebuilding trust between CIHR and the Canadian health research community and stakeholders, and some of our recommendations focus specifically on assisting that aspect.

Scope and Process of the Review

In September 2016, CIHR announced an independent, international review of the design and adjudication processes of CIHR's investigator-initiated programs. This was part of the mandated five-yearly cycle of international review at the agency. Specifically, the Panel was asked to address the following questions:

- Does the design of CIHR's reforms of investigator-initiated programs and peer review processes address their original objectives?

- Do the changes in program architecture and peer review allow CIHR to address the challenges posed by the breadth of its mandate, the evolving nature of science, and the growth of interdisciplinary research?

- What challenges in adjudication of applications for funding have been identified by public funding agencies internationally and in the literature on peer review and how do CIHR's reforms address these?

- Are the mechanisms set up by CIHR, including but not limited to the College of Reviewers, appropriate and sufficient to ensure peer review quality and impacts?

- What are international best practices in peer review that should be considered by CIHR to enhance quality and efficiency of its systems?

- What are the leading indicators and methods through which CIHR could evaluate the quality and efficiency of its peer review systems going forward?

Following review of the provided material and in response to feedback from the stakeholders, the Panel requested that its mandate be broadened. This was agreed with the Vice-Chair of the Governing Council and the CIHR President, and the Panel was invited to make observations about CIHR more generally. It was not possible for the Panel to fully disentangle issues of governance, management and the funding environment from the issues of peer review and program design; thus the Panel felt it was necessary to also comment on the role of these matters where relevant.

It should be noted that the peer review of Indigenous Health research grants was out of scope for the present Panel. This is due to a parallel process undertaken by CIHR in partnership with Indigenous Health researchers and representatives from Indigenous communities. The Peer Review Expert Panel commends CIHR and the Reference Group on Appropriate Review Practices for Indigenous Health Research for their work to design and implement innovative processes to conduct culturally appropriate peer review of Indigenous Health Research.

The Panel also did not consider, nor assess in any way, those programs of CIHR which are not funded through either the Foundation Grant or Project Grant programs. We note that the collective investment across provincial funders equates to approximately 50% of the level of funding at CIHR (~$500M); however, an examination of provincial health research funding support and how it relates to CIHR was also outside of the scope.

The Panel acknowledges that this review took place very early in the implementation of a number of changes and thus the review is being conducted against limited information as to the effect of the changes. Nevertheless, the Panel feels confident that it is able to make substantive recommendations that could assist CIHR in evolving further in accord with its own mandate. As a result, the Panel now recommends further restructuring to address problems that have been identified, to continue the direction and intent of the reforms, and to rebuild trust in the grant allocation process. As such, the Panel recognizes that the reforms require ongoing monitoring and evaluation.

The Panel received three extensive background documentation packages from CIHR in October, December, and January. Together with papers provided by those who presented to the panel, the briefing papers were approximately 1000 pages in length. A full list of all documentation provided to the Panel is in Appendix C. In brief, the Panel received:

- Detailed background information on the design and implementation of the reforms;

- Reports of CIHR funding results, reviewer and applicant satisfaction surveys, and audits of the implementation of the reforms;

- Two commissioned reports from independent consultants – a bibliometric analysis (conducted by l'Observatoire des Sciences et des Technologies) and a literature review of best practices in peer review (conducted by RAND Europe);

- Submissions of stakeholder feedback collected through the CIHR website and by the CIHR Institutes, briefs submitted by organizations, and letters previously submitted about the reforms (e.g., the Open Letter to the Minister of Health from June 2016); and

- Reports and slide packs by CIHR staff and those invited to meet the Panel.

The Panel wishes to place on record its appreciation for the quality and depth of the reports provided, and the rapid response of the CIHR staff to all requests for information. All data, including additional analyses that the Panel requested from the CIHR, were readily provided. The Panel wants to emphasize and acknowledge the professionalism with which the CIHR staff, officers and President engaged with the review process. The Panel was also most impressed with the obvious depth of thought, commitment, honesty and passion that went into the submissions it received from stakeholders.

On January 16-18, 2017, the Panel held face-to-face meetings in Ottawa and used the opportunity to meet with CIHR executives, representatives from the research community, and representatives from other key CIHR stakeholder organizations and to draft the report. The agenda is in Appendix D.

These meetings and the submissions that the Panel received made clear that there is a collective commitment by all stakeholders to improving CIHR's processes and funding for investigator-initiated research. This includes CIHR Governing Council and leadership, researchers, provincial agencies, universities, health care centres, and hospitals. The Panel believes that this passion needs to be harnessed and translated into a common vision and goal for health research in Canada.

Given this, we begin our report by setting out the context for the Peer Review Expert Panel and making some general observations on CIHR and on the peer review and grant allocation system at CIHR for investigator-initiated research. Building on these high-level comments, we then explore the purpose of grant review and apply that analysis to the concept, design, and implementation of the reforms at CIHR. Taking those lessons, we put forward a generic model for CIHR to consider, based on a set of principles for peer review. We finish with some concluding comments and a set of recommendations. In the appendices we set out answers to the six questions the Panel was asked to address, details of Panel membership, a list of materials that were provided to the Panel as part of this review, and the Agenda for our meetings in January 2017.

Context of the Peer Review Expert Panel

Since the release of the 2009 Health Research Roadmap and indeed since the 2011 International Review of CIHR, the organization has been impacted by a series of significant and simultaneous changes. These changes have been both internal and external to CIHR, including:

- The essential flat-lining of the CIHR budget after a prior period of growth;

- The 2009 decision by the Social Sciences and Humanities Research Council (SSHRC) that health-related research would no longer be eligible for support from SSHRC;

- Sun-setting of some programs to shift into the new open suite (e.g., knowledge translation programs; commercialization program);

- The Institute modernization process (e.g., reduction in Institute Advisory Boards and a shift in the funding role of Institutes); and,

- The parallel introduction of both the Foundation and Project Grant Programs and related peer review processes.

It is also possible that there may be some legacy issues within the research community related to the expanded mission of the CIHR compared to that of the previous Medical Research Council when the CIHR Act was passed in 2000. These attitudes may have been aggravated given a series of government investments for targeted programs (such as the Strategy for Patient-Oriented Research (SPOR)) without any concurrent increases in the open grant programs.

This context made it a challenge to identify where issues were specific to peer review, the CIHR reforms more generally, the other changes happening in parallel at CIHR, the CIHR mandate, or whether some issues were primarily a manifestation of the broader context of constrained health research funding. There is no doubt that the implementation of changes to the peer review system at CIHR was highly problematic. In particular, the over-reliance on, and then the failure of, the matching algorithm started a series of cascading implementation issues. But the response to these issues became conflated with broader concerns about the general state of health research in Canada and with other issues in the reform process. This escalated the tensions and exposed a sense of crisis in the Canadian health research community.

It is the Panel's assessment that the confluence of factors that created such a "perfect storm" led to a situation where a correlation between the CIHR reforms and the current state of the health research enterprise has been treated as causation. Without in any way diminishing the very justified concerns about the problems and failures in the implementation of the new review system, if the underlying issues, and in particular the mismatch between mandate and funding levels, are not addressed, then the health research enterprise in Canada will not meet its remarkable potential and promise.

The Panel also recognizes that its work is occurring at the same time as the Government of Canada's Fundamental Science Review, and the Panel is hopeful that the Fundamental Science Review will attend to some of the broader structural issues which are impacting the health research enterprise, including budget and cross-funding Council collaboration.

General Comments on the Panel's Perspective and Objectives

We emphasize from the outset that peer review is but one part, albeit a core component, of the process of grant review and the consequent decisions to allocate research funding. In making its decisions regarding funding allocations, the CIHR has a broad range of considerations in meeting its responsibilities and mandate. Other considerations include the overall strategic intent of the Government on behalf of the citizens of Canada, the strategic priorities set by the Governing Council of CIHR, the balance of funding allocation tools used (e.g., the mix of priority-driven and investigator-initiated research; the prioritization of the type of research or researcher) and other factors that go beyond the assessment of research excellence in deciding which applications to fund such as impact or addressing research gaps. Peer review is primarily focused on that component of the process which is defining research excellence.

Funding agencies globally increasingly take these broader strategic considerations into account in designing their grant review process. Further, there is no singular "gold standard" process of grant review, and thus of peer review. Many agencies, including CIHR, have multiple processes designed according to particular program goals so as to reflect the range of obligations within their mission. To be successful, the peer review processes of any granting council must be perceived as fair by all key stakeholders: the funder, its staff, the research community and their institutions.

Peer review is a subjective assessment that at best can be pseudo-objective.

The Panel makes its comments from this perspective and with the goal of being constructive and forward-looking. At the heart of all its commentary and associated recommendations, the Panel identified four goals which it considered to be of primary importance to the present review:

- To assist CIHR to meet its obligations in accord with its Act and the purposes defined in clause 1 of the CIHR Act;

- To ensure a strategic alignment and understanding of the competitive funding programs (Foundation and Project Grant Programs) of the CIHR portfolio;

- To ensure that the grant review process and the peer review component of that process are best practice and perceived by key stakeholders including the research community as being fair, robust and trustworthy; and,

- To rebuild trust in CIHR.

General Observations

CIHR

The following general observations made by the Panel relate to the ability of CIHR to effectively implement changes to its investigator-initiated research programs and grant review system.

- CIHR is not solely responsible for the health research system in Canada. Many of the challenges raised by stakeholders relate to systems issues. The way forward for CIHR needs to be grounded in its mandate to improve the health of Canadians and to serve Canadians as a public agency. Partners, stakeholders, institutions (universities; academic health sciences centres) all have a role in supporting the system and should own their role in the enterprise. We noted that some stakeholders had not fully recognized that they too have a critical role to play.

- Constrained health research funding environment in Canada. The flatlining of the CIHR budget since 2010 [Footnote 1], in combination with increasing application pressure, has meant an effective decline in available funding. Earlier increases in funding, plus the growth of the tertiary sector and the innovation-focused economy [Footnote 2], had meant that the health research community had been growing in size. Experience from elsewhere [Footnote 3] demonstrates that rapid growth followed by a leveling out of funding typically creates greater pressure, especially as younger investigators trained and encouraged during the growth phase enter the independent-investigator pool.

At the same time, the potential of health research both to improve the health of the Canadian population and to impact positively on the innovation economy has grown, and this has created cost pressures as the range of tools, approaches, and questions has expanded. It has also led to a broader range of investigator classes, such as engineers, data scientists, social scientists, policy and evaluation scientists, and humanities researchers, having a major role to play in health-related research and turning to the CIHR for support. This was further exacerbated in 2009 by the SSHRC decision to change its eligibility criteria related to health research.

The simple reality is that the absolute funding levels available for health-related research in Canada remain a major factor in the tensions existing within the research community and have created difficulties for CIHR (e.g., with respect to the balance between Foundation and Project Grant Programs). The net effect of all these factors has been a declining success rate in CIHR's investigator-initiated programs. The data suggest that it is the funding level, rather than the system that was developed, that led to considerable interruptions in the funding of many established research programs. The design of the grant review reforms was apparently conceived under the assumption of funding increases to the CIHR investigator-initiated programs. These increases did not materialize through the period of implementation, which impacted the ability to deliver.

We also note that the provincial health research organizations are significant funders of some components of health research and that this situation has put them under increasing pressure.

- Fragmentation of the research community. The funding situation described above has fostered a climate where stakeholders within the health research communities from across CIHR's constituencies are somewhat fragmented. The performance of the research system can only be enhanced by a more collective and aligned approach and recognition that all must work actively together for a stronger, inclusive and impactful health research environment extending from discovery research to evaluation research. It requires the continued enhancement of the integration of new and old disciplines, including those within the biomedical and engineering sciences, the social sciences and humanities.

- Foundation and Project Grant Programs. The concurrent introduction of these two programs was logical. They have distinct and important intent and there are comparable schemes in many jurisdictions. But introducing these two programs at the same time as changing the peer review process itself put added pressure on the entire system: it effectively increased the number of applications as individuals submitted into both programs, and this also contributed to the implementation failures. Unfortunately, it also appeared to have created a sense of competitive tension between the two programs in the minds of some within the research community. In retrospect, had the Foundation Grant program been introduced with fewer awards and with a slower build-up, this might have reduced the tension and assisted in the transition. While there were efforts to pilot aspects of the new programs, similarly designed programs [Footnote 4] elsewhere have been implemented at slower rates than at CIHR.

- Lack of a shared understanding and clear strategy. It is the Governing Council of CIHR that has the responsibility to set strategy for CIHR. While CIHR has a strategic plan, the overall strategic directions for the funding allocation in the reforms and the balance of the portfolio were neither well-expressed nor well-understood, either internally or externally. The reforms and related expenditures did not seem to be linked to an overall, explicit investment strategy.

As will be discussed later in this report, there are a number of strategic elements to grant review that will need to be explicitly determined by CIHR as it moves ahead (e.g., the balance between assessing excellence and impact; the allocation of dedicated funds to early career researchers). These must be well-communicated to all stakeholders.

- Fundamental failure of implementation. Despite the good work put into the design of the reformed peer review system and grant funding structure, it is indisputably the case that CIHR failed in its duty to effectively implement these changes. These implementation failures included: the failure to effectively pilot the applicant-to-reviewer matching algorithm; failure to have in place the College of Reviewers at the outset of the reforms; failure to effectively engage the research community throughout the reforms; and failure to maintain the trust and confidence of CIHR's main stakeholders, the research community and Canadians, as represented through politicians. We are not qualified to comment on the root cause of these failures, but wonder whether the change management capacity and capabilities of CIHR were adequate to deliver the reforms as designed. We emphasize that these were operational, not outcome, failures: the design intent was appropriate, and the outcomes of the competitions that followed did not lead to inappropriate shifts in the portfolio of investigators funded. The CIHR has continued to invest taxpayer money wisely and appropriately through these programs.

- Meaningful consultation processes. There were many allegations of insufficient consultations on the reforms to CIHR's investigator-initiated programs. However, this Panel observes that there appeared to be many engagement activities over a long period of time and was impressed with the number of events and efforts made. This disconnect suggests that the context of the consultation was not ideal and that either effective dialogue was not achieved or expectations were unrealistic. The lack of strategic clarity, together with the broader contextual issues discussed above, likely contributed to the sense of inadequate dialogue.

"On the fly" design changes to the programs in response to community feedback during the summer of 2016 may have worsened problems rather than resolving them, as they created confusion in the community. Effective consultation is extremely difficult when there is a loss of trust.

- Lack of transparency. We heard from stakeholders that there were expectations for greater 'transparency'. What this actually means to stakeholders was less clear; however, many called for greater access to data. CIHR has a very extensive website and many data are available. It is good practice internationally to share data on grant success rates by sex, stage of career, etc. CIHR is doing this and should continue to do so (Footnote 5). Further, CIHR should consider sharing data on the proportion of grants that are fundable but not funded, as a strategy to distinguish between what is a peer review issue and what is a budget issue.

The data CIHR releases regarding successful funding applications are generally similar to those that other agencies around the world release. While the Panel heard requests to release more extensive data with specific details on unsuccessful applications, we believe this is unrealistic. We were not aware of any comparable agency that does so and there are many cogent reasons why this is not the case. On the other hand, other agencies will, subject to appropriate safeguards, permit research analysis of all grants on the basis of a formal research proposal. There is no reason why CIHR could not do so, and the NIH Data Book may offer an example to follow (Footnote 6).

- Breakdown of trust. It is the belief of the Panel that the factors above, as well as the implementation problems in peer review, have all contributed to a significant breakdown of trust between CIHR and its stakeholders, in particular some members of its research community.

- Governance of CIHR. The governance model outlined in the CIHR Act, where the President is also the Chair of the Governing Council, is not seen by this Panel as an appropriate model: it is not aligned with international best practice for funding agency governance.

While noting this matter, the Panel was impressed with the professionalism with which the President and the Vice-Chair attempted to address this issue, with the Vice-Chair acting as chair of Council when appropriate and the President leaving the Council meeting during appropriate in camera sessions. The Panel is confident that the President and Vice-Chair acted with absolute integrity in this regard. But the Act itself is not in accord with good practice with regard to separating governance and management functions. When trust is under pressure, perception can be as important as reality.

In dealing with change management and risk mitigation, it is absolutely essential that there are good governance structures and procedures and independent oversight of management. While it was not our role to evaluate the history of the problems that emerged, we suspect that these problems might have been partially mitigated by clearer separation of governance and management and more independence of the Governing Council.

The Grant and Peer Review System at CIHR

Research funding allocation

Few, if any, countries can afford to do everything that a research community might want to do. Therefore, choices need to be made and these must be informed by a prioritization strategy. At the highest level this is set by a Government in its decisions about how much to spend on health research vis-à-vis other forms of research and other domains of government expenditure. It may make further decisions by way of direction to the funding agency either in legislation or through other constitutional methods such as ministerial letters of expectation. The funding agency itself then has some core prioritization strategies to decide. In its governance role, it must ensure the appropriate balance between basic and applied science, acknowledging that the political perspective on the former may require strong advocacy by the agency to protect basic science. Among those strategic decisions, and implied within the CIHR Act, is the balance between excellence and impact/relevance.

No one would debate the importance of the assessment of excellence, but there are multiple criteria for, and perspectives on, defining excellence. These include: the clarity of question, methodology, statistical design where relevant, stakeholder engagement where relevant (for example in community-based research), and the quality of the researcher and research team and infrastructure. Classic peer review by domain experts is designed largely to determine the core research elements of excellence.

Beyond the assessment of research excellence, increasingly countries are requiring that their research agencies give greater weight to the assessment of relevance and impact. Impact is more than simply direct economic or health impact. Indeed, basic science can be highly impactful and is a core part of the research mission. If impact is to be assessed, there needs to be a clear and transparent taxonomy of impact (Footnote 7). It is important to have a broad and inclusive concept of impact – it can extend from impact on the research capacities of a country through training or methodology development, to new fundamental insights, to translational impacts on economics, society and health, and even to diplomatic considerations. This is distinct from the traditional academic concept of impact as defined bibliometrically. Funding agencies internationally are increasingly considering how to define and evaluate impact, and some now evaluate impact through a process distinct from that of excellence evaluation. The policy issue for the agency is then how to combine these assessments so as to decide who to fund.

Given that the goal of CIHR is to achieve impact through research across a very broad mandate, we consider that CIHR must have clarity on this question in designing its grant allocation process.

A narrow definition of what is considered a "peer" in the minds of many (i.e., having comparable and very specific domain expertise) creates an unnecessary tension between scientific excellence and impact and can limit the ability to assess the latter. The embedded practice at CIHR of allowing the peer review score or rank to drive funding decisions almost entirely further fuels this tension and, in a low success rate environment, this becomes challenging. The CIHR Act requires that CIHR consider both excellence and impact given the agency mandate for knowledge translation to "improve the health of Canadians, more effective health services and products and a strengthened Canadian health care system". How excellence and impact are weighted and defined and how they are assessed will determine design features of a grant review process.

Notwithstanding these comments, poor research is a waste of money and so quality must always be a first filter against which any other criteria are applied.

Peer review is one, but not the only, input to grant review and funding allocation

There are many dimensions to funding allocation beyond peer review, from the macro such as Government directions for science and innovation, to meso such as organizational mandate and strategic priorities, to micro such as reviewer selection and assignment and review criteria. The Panel observes that CIHR and the research community seem to have ascribed a boundary to investigator-initiated grant review that is limited to peer review by subject matter experts in the topic of a grant. If a broader view of grant review were adopted, the system could, for example and where appropriate, include review by a subject peer, review by an end user, citizen or patient review, methodological review, impact review, etc.

The purpose of peer review

The primary purpose of peer review is to provide an expert input into the grant allocation process. However, it was clear from some stakeholders that there is a wide expectation within the Canadian research community that it has a second purpose, namely capacity and capability building. There was an expectation of having reviewers provide extensive feedback to the unsuccessful applicant such that the applicant will be able to modify the application and feed it back into the system with a greater expectation of funding. In the extreme, recurrent repetition can lead to assessing committees effectively rewriting the grant. This is one reason that has led some agencies to increase restrictions on resubmissions.

This educational function of peer review, if it is central, has immense design implications: it puts a large burden on reviewers, it can discourage them from participating and it can bias the system. High reviewer burden contributes to reviewers declining invitations to review. This educational purpose must be a secondary, not a primary, purpose of grant review, and an increasing number of other granting systems have reduced the expectations on review committees in this regard. Some systems now give minimal or one-line feedback, especially to grant applications that do not pass the triage stage. Other agencies still offer extensive feedback. Many agencies limit the number of times a grant can be resubmitted and some do not permit resubmission of any grants below a certain quality threshold (Footnote 8).

The Panel, in accord with growing practice internationally, is of the view that the primary responsibility for the development of researchers and their proposals lies with their host institutions, not with CIHR.

Some institutions already have quality assurance and scientific support mechanisms in place to improve grant quality prior to submission and this is to be encouraged.

Ultimately, how a grant review system is designed relates very closely to this matter of the nature and degree of intended feedback. Effectively, this is a strategic and operational decision about the objectives of grant review. Some feedback from the peer review system is desirable, primarily for the purposes of transparency, particularly for those grants that are assessed as suitable for funding but are not funded because of funding limitations. Notwithstanding these comments, even if the primary objective of grant review is to allocate funding, processes to enhance feedback to applicants may engender trust in the system.

There are limitations to peer review and peer review models

In spite of the strong faith in peer review by stakeholders and by CIHR, it is widely agreed that there is no "perfect" peer review system globally. Peer review is a human undertaking and is more of an art than a science. It is ultimately a subjective process. Peer review only works if those engaged with the system (applicants, reviewers, and funders) believe that it is fair and appropriate.

Peer review of grants is effective in determining what is clearly supportable and what is clearly not, but this distinction is qualitative and is distinct from the definition of what is funded or not, which is determined in large part by the available funding. In general, most grant systems find more applications to be fundable than they have money to allocate. Unfortunately, peer review of grants is less effective at establishing the percentile (i.e., rank order) of what should be funded within the fundable pool of grants (i.e., grey zone). When the rank order becomes disproportionately important, such as in a context with success rates below (say) 20%, the effectiveness of peer review in predicting future productivity goes down (Fang et al. 2016; Doyle et al. 2015; Lauer et al. 2015a; Lauer et al. 2015b).

The Panel congratulates CIHR on commissioning, in advance of our review, a major literature review of and commentary on peer review processes by RAND Europe. This was made available to the review committee. Beyond that, the members of the Panel have considerable experience with a number of well-regarded international research granting organizations. As that review points out, the available research literature on grant review is limited. There is an irony in the fact that research into the allocation of funds to research is extremely limited, and this has led to tradition, anecdote, and even mythology affecting investigators' attitudes to any peer review system.

Peer review processes

We heard much about the importance of face-to-face review. There appears to be a strong belief in the minds of many researchers that face-to-face peer review confers benefits such as greater accountability of reviewers to deliver high quality reviews and also reviewer benefits related to networking, mentorship, capacity building, etc. The Panel believes the asserted benefits of face-to-face peer review are overstated. Accountability can be achieved in multiple ways beyond face-to-face meetings, for example by electronic interaction, by two-stage reviews with different reviewers, or by allowing applicants to respond to reviewers' comments. Secondary considerations related to networking should be the role of institutions, academic organizations, and/or professional associations.

It is not even clear from the literature that discussion between reviewers increases the reliability of scores (Fogelholm et al. 2012; Mayo et al. 2006). Further, there are only two studies that have evaluated virtual peer review by teleconference and through the use of Second Life, a virtual world (Pier et al. 2015; Gallo et al. 2013). Pier et al. (2015) set up one videoconference and three face-to-face panels modelled on NIH review procedures, concluding that scoring was similar between face-to-face and videoconference panels but noting that all participants reported a preference for face-to-face arrangements. Gallo et al. (2013) examined four years of peer review discussions, two years face-to-face and two years teleconferencing. They found minimal differences in merit score distribution, inter-rater reliability or reviewer demographics; however, they did find some differences in discussion time. They also noted that panel discussion, of any type, only affects the funding decision for around 10% of applications relative to original scores, and that panel revisions of scores are generally reductions. In sum, the difference between outcomes of face-to-face discussions and teleconferences was minimal.

Evidence also suggests that peer review in its traditional face-to-face format is subject to biases based on individual characteristics (e.g., Jang et al. 2016; Tamblyn et al. 2016; Kaatz et al. 2014), and that decision-making can become conservative (Boudreau et al. 2012; 2016) and subject to group dynamics (Olbrecht et al. 2010), with just the few individuals considered the most 'competent' in a particular topic often leading the decision-making process (Luukkonen et al. 2012). Whether these shortcomings would be addressed by a virtual format is as yet untested.

Undeclared conflicts of interest can remain despite the efforts of funders to address them. Looking at American Institute of Biological Sciences panels, which rely on self-reporting to identify conflicts of interest, Gallo et al. (2016) found that overall a third of reviewers had at least one conflict on a peer review committee. However, only 35% of conflicts were self-reported; manual checking identified the other 65%.

One of the important design changes made by CIHR was a shift away from relatively stable assessment committees. The Panel agrees with this intent. There are disadvantages to such standing committees: for example, stable assessment committees allow individuals to write the grant for known reviewers, and there can be systematic biases within the committee that persist over several rounds. Committees also have their own dynamics that can create positive or negative biases. There can be dangers in 'groupthink' and there is the potential for a strong personality to hijack the discussion (Footnote 9).

The limited use of international reviewers

The Panel observed that CIHR makes very limited use of international reviewers – typically around 10% of reviewers for its investigator-initiated programs are based at institutions outside of Canada. Further, there were surprising and concerning (to the Panel) differences in views across stakeholders about the value and feasibility of engaging international reviewers in CIHR's processes. It is the firm view of the Panel that the increased use of international reviewers could add considerable value to the Canadian system by: providing an increased pool of reviewers from whom to draw (increased number, increased access to expertise, greater access to francophone reviewers); positioning the evaluation of Canadian science against world-class benchmarks; and limiting the risks of actual or perceived conflicts of interest and cronyism – these latter perceptions persisted as a significant concern for some stakeholders until the recent changes and were part of CIHR's consideration in moving away from standing assessment panels. These issues of unconscious or actual committee biases and conflicts are factors that are increasingly guiding restructuring of grant award systems globally (Footnote 10).

Considerations for CIHR Grant Review Processes: Moving Forward

Clarity of objectives of grant review

CIHR will need to set a more explicit strategic direction for its objectives of grant review (e.g., allocation vs. capacity building; excellence vs. impact) and clearly communicate these to the research community. This decision may result in CIHR developing differing grant review criteria for different classes of research, as indeed is now the case for the Foundation Grant versus Project Grant Programs. This will help to manage the expectations of the community regarding grant review.

There remains a diversity of approaches to addressing these core issues related to grant review. For example, Science Foundation Ireland has distinct panels to adjudicate on excellence and impact (first deciding the excellent applications and then placing these in rank order by impact). The Howard Hughes Foundation provides minimal feedback in general, and many agencies such as the Swedish Research Council give little or no feedback to triaged applications. There is disappointingly little high-quality research into these issues, and CIHR must be congratulated for being willing to be innovative and evolutionary in its approach. There are lessons here for all funding agencies.

These strategic objectives become important in designing the later components of a grant review process. We particularly suggest CIHR clarify its expectations regarding excellence and impact and regarding feedback. Our suggestions later in this report reflect the increasing practice developing in other jurisdictions.

General model for grant review

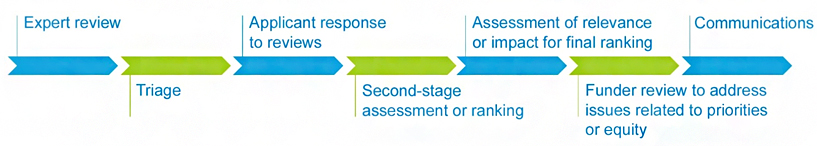

There are many ways to conduct peer review and much variation internationally in how this is done, but the following is a general schema:

- Expert review: Following submission, the grant (depending on the system, this may be a full grant or a short-form application) is subject to expert peer review, often electronically.

- Triage: This initial review leads to the triage of some grants, which are not considered further.

- Applicant response to reviews: In many systems, applicants who move to the next stage are given the opportunity to respond to reviewer comments by submitting an applicant response.

- Second-stage assessment or ranking: A further assessment, often by a committee or Panel, taking into account the applicant's response (if available), with identification and/or ranking of scientifically excellent, potentially fundable grants.

- Assessment of relevance or impact for final ranking: An assessment of relevance or impact (which may be combined with the second-stage assessment) to produce a final ranking.

- Funder review to address issues related to priorities or equity: A review by the funding council to include any special considerations (e.g., priority or equity issues) that may go beyond the ranking process before award decisions are finally taken by the Board of the funding council on the recommendation of the Executive.

- Communication: Communication of the results of the grant competition is increasingly detailed, often including a list of the fundable grants (funded and sometimes the reserve list) together with success metrics appropriately broken down with respect to gender, early career researchers, ethnicity, etc.

There are many decisions to be made about how each of these phases is operationalized (e.g., face-to-face or virtually; shorter applications in advance of the triage phase vs. full application; whether reviews and/or reviewers get carried forward across stages), but all work toward a point that synthesizes the reviews of the application into a decision to fund or not based on several considerations, including the strategic objectives of the funder.
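As an illustration only, the general schema can be sketched as a staged pipeline. The sketch below is not CIHR's process or that of any agency: the score scale, the triage cutoff, the excellence/impact weighting, and all names (`Application`, `triage`, `final_ranking`, `allocate`) are hypothetical assumptions chosen to show how triage, a combined ranking, and a budget-limited award step fit together. Second-stage and impact scores are pre-filled here for brevity, whereas in practice they would be produced only for applications that pass triage.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional, Tuple


@dataclass
class Application:
    app_id: str
    expert_scores: List[float]            # first-stage expert review (hypothetical 0-5 scale)
    panel_score: Optional[float] = None   # second-stage excellence assessment
    impact_score: Optional[float] = None  # relevance/impact assessment


def triage(apps: List[Application], cutoff: float) -> List[Application]:
    """Keep applications whose mean expert score meets the (assumed) cutoff."""
    return [a for a in apps if mean(a.expert_scores) >= cutoff]


def final_ranking(apps: List[Application], impact_weight: float) -> List[Application]:
    """Rank by a weighted mix of excellence and impact; the weight is a policy choice."""
    def combined(a: Application) -> float:
        return (1 - impact_weight) * a.panel_score + impact_weight * a.impact_score
    return sorted(apps, key=combined, reverse=True)


def allocate(ranked: List[Application], slots: int) -> Tuple[List[Application], List[Application]]:
    """Split the ranked list into funded grants and a fundable-but-unfunded reserve."""
    return ranked[:slots], ranked[slots:]


# Hypothetical competition with four applications and funding for two.
apps = [
    Application("A", [4.5, 4.0], panel_score=4.2, impact_score=3.0),
    Application("B", [2.0, 2.5]),  # falls below the triage cutoff
    Application("C", [4.0, 3.8], panel_score=4.0, impact_score=4.5),
    Application("D", [3.5, 3.5], panel_score=3.6, impact_score=3.0),
]
shortlisted = triage(apps, cutoff=3.0)
funded, reserve = allocate(final_ranking(shortlisted, impact_weight=0.3), slots=2)
print([a.app_id for a in funded], [a.app_id for a in reserve])
```

In this sketch the reserve list corresponds to grants that are fundable but not funded, which is the quantity that separates a budget constraint from a peer review judgement. The funder review for priority or equity considerations is deliberately left out, since it is a matter of judgement rather than arithmetic.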

There are different strategic reasons and cost implications for how each of these phases is implemented. Whatever approach is taken creates constraints. Other considerations such as capacity and capability building within the system, cost, efficiency, etc. also affect the final design choice.

When making these choices, the organization needs to consider the extent to which the design will engender trust in the system, and a belief in its fairness, among stakeholders – applicants, reviewers, the funder, and government on behalf of the public – and how the design supports the objectives of grant review set by the organization. Importantly, the system should work to the timetable that is provided in advance, as research funding decisions have major implications for individuals in their career paths.

CIHR has tried to have a unitary system with Foundation and Project Grants that worked across all stages of careers, disciplines, and pillars. CIHR needs to decide how best to allocate funding and give guidance to its reviewers and competition chairs to ensure appropriate review of applications, given differences in how excellence is assessed across these dimensions. We particularly suggest that this matters with regard to first-time applicants to CIHR.

Some stakeholders from the social sciences and humanities expressed concern with the ability of many reviewers within the CIHR community to review their proposals and asserted that this expertise rests primarily with SSHRC reviewers. It may be that closer collaboration with SSHRC could assist in identifying reviewers for CIHR.

Further, support for multidisciplinary research has significant implications for the design and implementation of peer review. Assessing such research is a challenge for funders internationally and is not limited to Canada. There are different approaches: agency interventions to support multidisciplinary research, multidisciplinary and expanded panels, etc. The Foundation Grant Program is essentially single-investigator focused and may not be the best approach to supporting long-term multidisciplinary research, but the system could be readily modified to do so.

Within the suite of programs that we were asked to consider, we noted a tension between the Foundation and Project Grant Programs. It is the view of the Panel that, had implementation gone more smoothly and been accompanied by additional budget, there might not have been so many concerns from the research community about the two-track design.

Having said that, there remains an ongoing discussion among some funders internationally about the relative priority to be given to investigator-focused grants such as Foundation Grants, which are designed not to constrain the applicant, and to funding programs that are more project-focused. The logic of, and interest in, the former is partly about reducing applicant burden for excellent researchers, but also about increasing the likelihood of funding more innovative and disruptive research when the applicant, rather than the project, is the focus of the grant evaluation. In contrast, project grants that are more intellectually conservative may be more likely to succeed in competition, particularly in underfunded systems. This is a matter where CIHR needs to find the appropriate balance between its programs.

We applaud CIHR for moving to identify and introduce a person-focused mechanism while maintaining a second project-focused mechanism. However, its introduction was conflated with other implementation issues and, in a flat-lined funding environment, a perception was created of taking resources from many to better fund a 'privileged' few investigators (even if justified on quality and/or seniority) for longer periods. It may be that the Foundation Grant numbers needed to be, and will need to be, more tightly constrained until additional funding is available. The allocation of 45% of the investigator-initiated budget to this program is viewed by the Panel as ambitious and too high at this stage and in the context of available funding.

Preliminary modelling of implementation of the new grant system and its process components was done by CIHR. However, delays and problems in implementation and subsequent "course corrections" resulted in program delivery not matching the modelling, in part because the underpinning assumptions made during design proved to be incorrect. In particular, the understandable behaviour of some applicants in applying in parallel to both programs and the cancellation of a funding round led to problems cascading beyond the parameters of the prior planning (e.g., many more applications being received than expected, which in turn strained the reviewer allocation systems).

The initial experience of these Programs has revealed that the intended distinctions between the two forms of grants may not have materialized. The Programs are too close in duration (grants of 4 to 5 years and in some cases longer for Project Grants; 5 or 7 years for Foundation Grants depending on stage of career), and the decisions to remove the application cap (and then reinstate it at a maximum of two Project Grant applications) or to limit the ability to apply to both programs, made in response to community feedback, have made the distinctions ever blurrier. The only real distinction is the initial person-focused preliminary application phase in the Foundation Grant process.

Timing of grant rounds

The CIHR process has been designed on the basis that one competition must finish before another starts. This creates major design constraints. Many funding systems take longer than 6 months from submission to award. Thus some only have one application per year (Footnote 11), while others have overlapping competitions and boundary rules (e.g., applicants cannot submit a grant currently under consideration to a new competition round) (Footnote 12).

The reason for multiple competitions a year is largely related to managing the number of applications per round. We strongly recommend that, while continuing with two Project competitions per year, CIHR not feel constrained to complete one competition before starting the next. Our design considerations, and in particular the recommended introduction of a response step, reflect this belief. If this were to occur, it would require that applicants not be permitted to submit the same application to any successive round whilst the original is still under evaluation.
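To make the boundary rule for overlapping competitions concrete, the following is a minimal sketch of an eligibility check. It assumes, purely for illustration, that "the same application" can be recognized by a stable identifier and that each submission records its submission and decision dates; the `Submission` type, the `may_submit` function, and the dates are hypothetical and do not describe any CIHR system.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Submission:
    application_id: str        # assumed stable identifier for "the same application"
    submitted: date
    decided: Optional[date]    # None while the application is still under evaluation


def may_submit(application_id: str, on: date, history: List[Submission]) -> bool:
    """Return False if this application is still under evaluation on the given date."""
    for s in history:
        if s.application_id != application_id:
            continue
        still_open = s.decided is None or s.decided > on
        if s.submitted <= on and still_open:
            return False
    return True


# Hypothetical history: P-001 is undecided, P-002 was decided on 15 August.
history = [
    Submission("P-001", date(2017, 3, 1), None),
    Submission("P-002", date(2017, 3, 1), date(2017, 8, 15)),
]
print(may_submit("P-001", date(2017, 9, 1), history))  # still under evaluation
print(may_submit("P-002", date(2017, 9, 1), history))  # decision already issued
```

How a funder decides that a revised proposal counts as "the same application" is a policy question that this sketch does not address.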

Selection and matching of reviewers

The Panel received submissions and heard repeatedly from the research community that there was a specific and perhaps somewhat limited expectation of what a "peer" is in peer review. In general, we heard the expectation that there should be very precise matching of expertise between applicant and reviewer. This Panel and international experience (e.g., RAND Europe review) would strongly argue that not all reviewers need to have tightly-matched domain-specific expertise and that there is considerable value in reviewers who are experienced investigators with broader and more generally relevant experience. Some reviewers for an application must have sufficiently close expertise to evaluate matters such as methodological and statistical issues or claims of novelty or innovation within the field, but reviewers with broader experience and insights may be better placed to put the proposed work in perspective and get beyond reductionist detail. Indeed there is some evidence that reviewers who are too close to a subject area may create positive or negative bias and thus not result in a fairer review (e.g., Gallo, 2016). Even within disciplines there are good and poor reviewers: just because someone is an outstanding scientist does not necessarily mean they are an outstanding reviewer.

This matter of definition of what constitutes an appropriate grant reviewer has direct implications for designing effective and appropriate approaches to reviewer selection and the matching of reviewers to applications. The Panel heard about a number of issues related to reviewer matching to applications, including the use of software and a matching algorithm that were not fit-for-purpose and insufficient human engagement, particularly in the first round of the Project Grant Program. We further heard that the human engagement in reviewer selection was also restricted because of legal difficulties over what data could be accessed from the Common CV (this has now been addressed; see Footnote 13).

As noted previously, the Panel believes that there are too few international reviewers within the CIHR system. If Canada wants to be world leading, it needs to bring more international experts into the system. If this is done, there is unlikely to be any incentive for international reviewers to use the Common CV, which is a bespoke Canadian system. Other internationally accepted systems (e.g., ORCID), or simply using an informed scientific review officer to undertake a literature search, should be considered. The Panel believes international reviewers are essential in a relatively small system; they break any sense of positive or negative bias that is likely to exist when many reviewers in one year are likely applicants the next. Further, they enhance the quality assurance for CIHR and the research community more broadly. Indeed, it is worth noting that some systems in smaller countries (e.g., Ireland and Finland) have moved entirely to using international reviewers to avoid these biases. The need for some domestic reviewers in some contexts (e.g., social sector research; Indigenous research) is fully accepted, but it is an overstatement to claim that exclusively domestic reviewers are required to review such grants. Further, international reviewers would expand the horizons of Canada's research uptake and impact.

While algorithm-based matching sounded attractive, there is a limit at this stage of artificial intelligence to what it can possibly achieve, and reviewer selection must be primarily informed by scientific human judgement. While the Panel understands that new approaches are being implemented on the recommendation of the post-July 13th Peer Review Working Group, the Panel is of the firm belief that the absence of Ph.D.-trained scientific review officers remains a gap in the CIHR system; this should be addressed urgently. Such staff are needed at the working level at CIHR to engage with and support the community, reviewers, and the Competition Chairs around reviewer assignment and monitoring of review quality. The Panel believes that assignment of reviewers should be conducted by scientific review officers at CIHR in collaboration, where necessary, with Competition Chairs. They may use many sources to identify potential reviewers including: matching programs, links with other national funding agencies (especially in the humanities and social sciences), literature searches, and prior experience of reviewers.

The College of Reviewers is a laudable idea and the Panel commends the work of Dr. Paul Kubes, the Executive Chair, and other College Chairs in working with CIHR to establish it. It is the belief of the Panel that the delayed implementation of the College of Reviewers by CIHR further compounded the technological challenges with reviewer matching and assignment. It was not ready when the reforms were implemented and remains in the early stages of development.

Competition Chairs should be selected on their experience and could be international. It is common practice internationally for people doing significant work for a funding agency to be remunerated financially for their contributions whether they are domestic or international.

Training of reviewers

There were also comments made regarding the value, particularly to the training of early career researchers, of allowing observation of grant review processes. If this were to be broadly implemented by CIHR, it would need to be with the knowledge that there is evidence that those given observer status are significantly advantaged for future applications. Given the small number of observers possible from a large potential applicant pool, this creates a bias.

Regardless of whether or not observation of grant review is implemented, training of reviewers requires much more definition by CIHR. CIHR currently provides guidance on aims, procedures, and roles. The Panel believes that the training available in unconscious bias is essential and should be required. However, we think that universities and other institutions should take a more direct role in the actual training of early career scientists in paper and grant review.

The Panel believes that independent observation of grant review processes may serve an important function in trust building and governance and supports the idea that some reporting on the peer review process (e.g., reports from Chairs; assessment of quality and satisfaction of the process by reviewers and/or applicants) has value. The Panel thinks that Governing Council members, who have the ultimate accountability for grant review at CIHR, and College of Reviewers Chairs should be given the opportunity to observe but not participate in the proposed second phase of grant review.

Equity

The Panel heard considerable concern from stakeholders about equity, but rarely with a definition of what was meant by the term. Depending on the definition, equity could reflect a strategic issue (i.e., balance of funding across different types of research and/or researchers based on pillar, stage of career, gender) or issues of unconscious bias by panel members. CIHR needs to get input from stakeholders and then clearly state and regularly review its equity objectives. We heard about the following potential equity issues:

- Stage of career: Depending on the outcomes of consultation, CIHR may need to consider different programs to meet the needs of early career researchers (ECRs). From the data we saw, if equity is looked at purely through the lens of absolute vs. relative success rates, then there is an issue for ECRs. However, if the grant review process is viewed through the lens of the already protected funding envelope that exists for ECRs in both the Foundation and Project Grant Programs, there is no evidence of bias in success rates by career stage cohort. If it is viewed through the lens of how one would generally expect ECRs to fare in a highly competitive and resource-constrained funding system, then one would anticipate the highest success rates from experienced researchers, which is the case. Each perspective gives a different conclusion about equity issues, and the Panel saw no systematic evidence of bias in that latter regard. We also note that, when this was identified as a potential issue in the Project Grant Program, the Government responded with an ongoing commitment of $30M of unfettered funds, which Governing Council directed to funding ECRs. Further, for the Foundation Grant Program, CIHR has decided that, in order to ensure ECRs are being treated fairly, approximately 50% of the applications from the new/early career investigators cohort will move from the preliminary to the definitive phase of the competition. CIHR will also ensure that it awards a minimum of 15% of grants to ECRs. Regardless of CIHR's role in addressing equity issues for ECRs, institutions have a role to play in building capacity among ECRs for high quality grant applications.

- Gender: The Panel noted that there had been evidence of a gender bias in the preliminary stage review of Foundation Grants. It is the understanding of the Panel that these issues were identified and have been responded to by CIHR by ensuring that, regardless of career stage, the proportion of female applicants moving forward from the preliminary stage to full application will, if necessary, be made equal to the proportion of female applicants to the competition.Footnote 14 This is a matter that can also be further addressed through training reviewers regarding unconscious bias. This has been done with some success in Sweden, for example, and CIHR has also implemented a training module to address this.Footnote 15

- Pillars of Research, basic and applied research and Social Sciences/Humanities Research: Seventeen years after the creation of CIHR and its broadened mandate, some legacy tensions regarding how it supports the four pillars of research remain evident. It may be that this is confounded with different perceptions of the needed balance between basic (which can be part of any pillar) and applied research. Basic research is critical, but in recent years this part of the community has felt that it has not received sufficient support given the targeted nature of any increases in federal government funding. CIHR does not seem to have a transparent strategy for allocating funding across these different domains, but this may be partially addressed through the priority-driven funding at CIHR, which was beyond the scope of this review.

The Panel acknowledges the challenges to the social sciences and humanities health-related research community in the different review cultures at SSHRC and CIHR and the different lenses that health scientists and social scientists/humanities researchers may bring to research questions. Further, the performance assessment of such researchers is very different to that traditionally used in biomedical and clinical research.Footnote 16 This issue seems to have become even more challenging post-2009 when SSHRC decided to no longer deem health-related applications eligible for its programs. The Panel feels that appropriate review of applications can be addressed by greater collaboration with SSHRC over funding and the review process, and greater use of international reviewers. Many other international agencies have engaged successfully in joint review between partner research agencies.

- Language: The need to ensure fair review of French language applications was acknowledged and raised in stakeholder reports received by the Panel. The Panel feels that much more widespread use of francophone reviewers from outside of Canada may assist.

- Indigenous research: Though beyond the scope of this review, the Panel acknowledges the context of Indigenous Health research and the importance of ensuring fair, appropriate, and context-sensitive review of related applications.

Based on how equity and equity objectives are defined by CIHR, a monitoring strategy should be put in place to track equity issues within the funding system and the data arising should be fully available and accessible.

Possible Options for CIHR Grant Review Going Forward

Given that any grant review design at a macro-level has many constraints and creates practical issues at an operational level, it is not for this Panel to prescribe the review process. As stated above, there are many considerations that go into design at each of the 5 or 6 stages of a grant allocation process. The Panel believes that fundamental principles need to be built into any design, and from these principles a general plan can be derived appropriate to the detailed logistical considerations that were beyond the Panel's brief.

The Panel was unanimous in concluding that a world-class system could be evolved from the important and innovative design principles that lay at the heart of the redesign that was attempted by CIHR. It is desirable to continue to follow this general path not only because of its rationale but because it can be incrementally developed and implemented progressively without great disruption. We would not recommend further reversion to the pre-2012 process that had real and perceived limitations.

We agree that Foundation grants should begin with a preliminary application, assessed virtually using the present criteria, with a selected number of applicants then invited to present a full application. Project grants should begin with submission of a full application.

We note that the concerns that emerged in the past two years included a number of specific technical issues about the precise details of the application forms (such as page length). The Panel did not consider these further, as it appears a consensus has been achieved.

The Panel recommends the following general schema for full applications to either the Foundation or Project Grant Programs.

Stage 1: Initial Review

This Panel concluded that face-to-face meetings are not necessary in the first stage review prior to triage.

Each application should be assigned a minimum of five reviewers. While these should include reviewers with well-matched expertise, CIHR also needs to consider the mix of reviewers needed to review the grant adequately (e.g., subject matter expertise, methods and in some cases statistical dimensions, and end users of research). It is possible that multidisciplinary grants may need more than five reviewers in Stage 1.

We believe that it would be advantageous if around half the reviewers were not Canadian, particularly in those areas of closest conceptual, subject matter and methodological matching to the research proposed. We note that issues of alleged cronyism and perceived bias would be addressed by such a policy and that this is a common practice for smaller scientific nations.

Scientific review officers should be introduced at CIHR. Assignment of reviewers to applications should be done by a scientific staff member at CIHR, ideally with some relevant expertise and using appropriate supporting tools (e.g., literature search, database, matching algorithm, experience). The final selection of reviewers might be subject to approval of a competition chair.

We consider that the design feature of selecting reviewers specifically for each grant rather than using standing committee(s) is highly desirable. We were impressed with the innovation of selecting reviewers so they had ~10 grants to review and then using a ranking algorithm to integrate scores while maintaining a system of individual selection of reviewers per grant. The net effect is that there is no stable and predictable committee and that each grant gets matched to relevant reviewers. Thus the majority of grants have an individual selection of reviewers and virtually no two grants have an identical mix. We would hope that this innovative system is maintained. The ranking algorithm appears to be appropriate to handle this approach.

In the case of international reviewers, we see no reason that they could not also be asked to review a cluster of the same number of grants so that their ranking has the same validity. Realistically, however, this would require paying international reviewers for a substantive effort. Other international agencies do so.

Interaction between reviewers at this first stage is not universal in other systems but it may have some value given our recommendations about the mix of reviewers. The Panel believes that all comments made through asynchronous reviews (if used) should go forward to those reviewing at a later stage in the process.

Stage 1 reviews can be virtual. They can be totally independent, or subject to asynchronous electronic sharing of any preliminary comments (although most systems would not do that at this stage), followed by final scoring.

A supplement to the reviewer ranking would be to add a qualitative ranking, as is done in some jurisdictions. This greatly assists the triage process. The categories could be:

- Highly fundable

- Possibly fundable

- Would need significant improvement for any consideration

- Do not fund

The criteria for scoring and ranking need to be clear, as is currently the case.Footnote 17 The Panel believes research excellence should be the primary consideration at this stage.

Stage 2: Triage

Triage is essential given the volume of applications and has become normal practice in many systems. We see no reason to change the basic nature of the ranking system currently used in the initial phase and were impressed with the data showing its fidelity at the high and low ends of grant quality. The level of triage needs to be realistic, in that there seems to be little value in taking to further evaluation an excessive number of grants relative to the funding available. A rational ratio would seem to be about two grants taken forward for every grant likely to be funded. This should be based on the rankings, subject to the majority of referees placing the grant in categories 1 or 2 above (the top two qualitative categories).
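
As an illustration only, the triage rule described above can be sketched in a few lines of Python. This is not CIHR's implementation. The names (Application, has_majority_support, triage) and the data layout are hypothetical, and the sketch assumes one particular reading of the rule: applications lacking a majority of referees in categories 1 or 2 are excluded first, and the remainder are then taken forward in rank order up to about twice the number of grants likely to be funded.

```python
from dataclasses import dataclass
from typing import List

# Qualitative categories from Stage 1, numbered in the order listed above.
HIGHLY_FUNDABLE = 1
POSSIBLY_FUNDABLE = 2
NEEDS_SIGNIFICANT_IMPROVEMENT = 3
DO_NOT_FUND = 4


@dataclass
class Application:
    app_id: str            # hypothetical identifier
    rank: int              # overall rank from the ranking algorithm (1 = best)
    categories: List[int]  # one qualitative category per referee


def has_majority_support(app: Application) -> bool:
    """True if more than half of the referees chose category 1 or 2."""
    favourable = sum(1 for c in app.categories if c <= POSSIBLY_FUNDABLE)
    return favourable * 2 > len(app.categories)


def triage(applications: List[Application],
           expected_funded: int,
           ratio: float = 2.0) -> List[Application]:
    """Return the applications taken forward to further evaluation.

    Assumption: the majority condition is applied as a filter, then the
    best-ranked survivors are kept up to ratio * expected_funded.
    """
    quota = round(expected_funded * ratio)
    eligible = [a for a in applications if has_majority_support(a)]
    eligible.sort(key=lambda a: a.rank)
    return eligible[:quota]


if __name__ == "__main__":
    apps = [
        Application("A", 1, [1, 1, 2, 3, 4]),
        Application("B", 2, [3, 3, 4, 2, 1]),  # only 2 of 5 favourable
        Application("C", 3, [2, 2, 2, 1, 1]),
        Application("D", 4, [1, 2, 2, 3, 3]),
        Application("E", 5, [1, 1, 1, 1, 1]),
    ]
    # One grant likely to be funded, so two applications go forward.
    print([a.app_id for a in triage(apps, expected_funded=1)])  # ['A', 'C']
```

In this example application B is ranked second but is not taken forward, because only two of its five referees placed it in the top two categories. Whether the majority condition should act as a filter before the quota is applied, or as a check afterwards, is a design choice the text leaves open.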

Stage 3: Feedback and Applicant Response